The Last Time You'll Write a Workflow.

Why Agentic AI Is Replacing Hardcoded Automation

What Are Agentic Workflows and How Do They Differ from Traditional Automation?

An agentic workflow is a software system powered by a large language model (LLM) - such as GPT-4o, Claude, or Gemini - that pursues a goal rather than executing a fixed sequence of steps. Instead of following pre-mapped instructions, an agent reads its environment, reasons about it, and decides what to do next at runtime.

Traditional automation runs on rails: every decision is pre-made, every step is hardcoded, every scenario anticipated in advance. Agentic systems are different. You define the goal. The agent figures out how to reach it.

| Traditional Automation | Agentic Workflows |
|---|---|
| Follows fixed instructions | Pursues defined goals |
| Breaks when conditions change | Adapts to new information |
| Designed at build time | Reasons at runtime |
| Static flowcharts | Dynamic decision-making |
| Executes your steps | Chooses its own steps |

According to Anthropic's published research on agentic systems, LLM-powered agents can break complex problems into smaller subtasks, call external tools, and revise their approach mid-execution - capabilities that fundamentally separate them from rule-based automation.

How Do Agentic Workflows Actually Work?

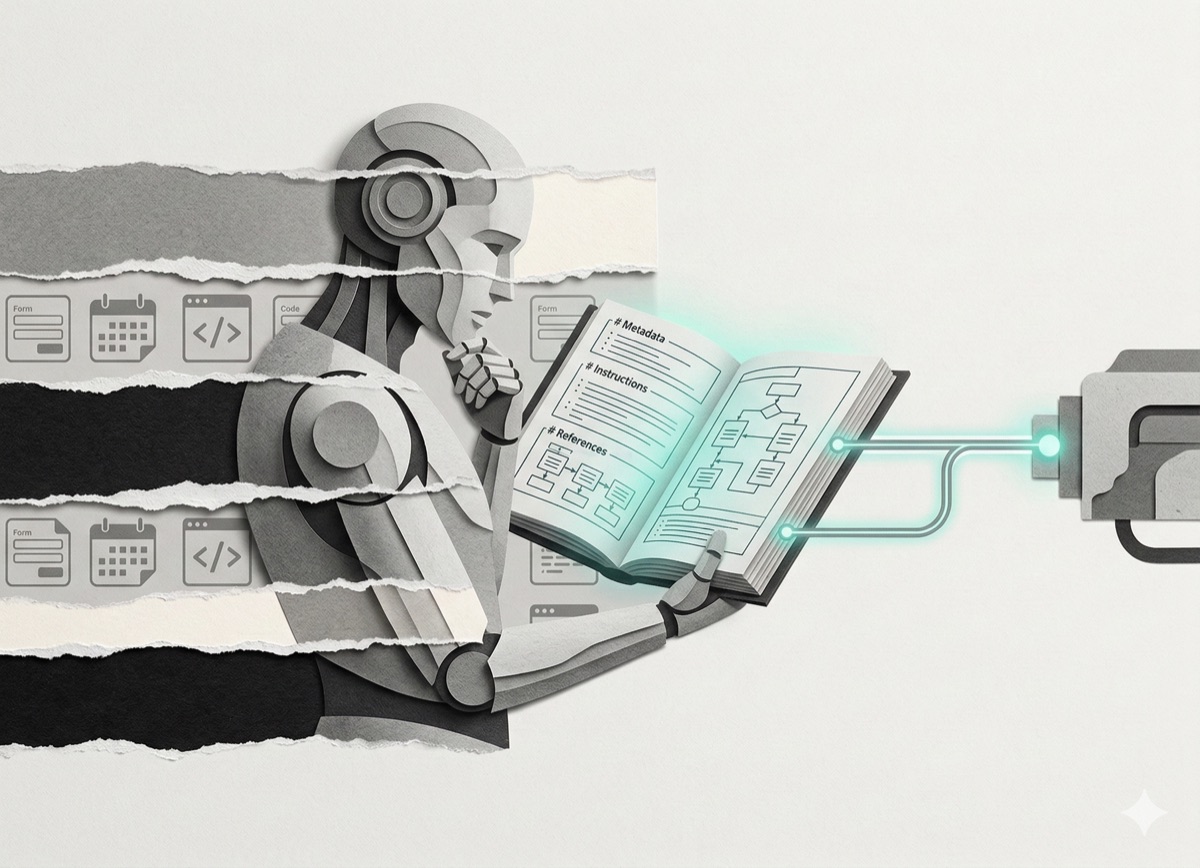

An agentic workflow is built from three core components working together:

- System prompts - Define the agent's role, constraints, and objectives

- Tools - Let the agent take actions: call APIs, read databases, write files, search the web

- Memory - Allows the agent to retain context across steps and sessions

The LLM functions as the reasoning engine. It reads available context, selects the right tool, executes an action, observes the result, and decides what to do next. This loop - perceive, reason, act - continues until the goal is reached.
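
The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `fake_llm`, `lookup_order`, and the `Decision` type are hypothetical stand-ins, and the "model" is hardcoded so the example runs offline. A real agent would replace `fake_llm` with a call to an actual LLM.

```python
from dataclasses import dataclass
from typing import Callable, Optional


def lookup_order(order_id: str) -> str:
    """Hypothetical tool; a real one would call an API or database."""
    return f"order {order_id}: shipped"


TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}


@dataclass
class Decision:
    tool: Optional[str]   # tool to call next, or None when the goal is reached
    argument: str = ""
    answer: str = ""


def fake_llm(goal: str, observations: list[str]) -> Decision:
    """Stand-in for a real model call, hardcoded so the example runs offline."""
    if not observations:
        return Decision(tool="lookup_order", argument="A123")
    return Decision(tool=None, answer=f"Done: {observations[-1]}")


def run_agent(goal: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        decision = fake_llm(goal, observations)           # reason over context so far
        if decision.tool is None:
            return decision.answer                        # goal reached
        result = TOOLS[decision.tool](decision.argument)  # act by calling a tool
        observations.append(result)                       # perceive the result
    return "stopped: step limit reached"


print(run_agent("Where is order A123?"))
```

The `max_steps` cap is a design choice worth keeping in any real version: without it, a model that never decides it is finished would loop indefinitely.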

Why this matters: Traditional systems require a human to anticipate every edge case at design time. Agentic systems can handle many novel situations because they reason about them at runtime instead of matching against pre-written rules.

What Are the Main Agentic Architecture Patterns?

Supervisor Pattern

One orchestrator agent coordinates multiple specialized worker agents. The supervisor receives the task, breaks it into subtasks, delegates to specialists, and synthesizes results. This is hierarchical: the supervisor decides, the workers execute.

Best for: Complex multi-step tasks requiring coordinated decision-making across domains - such as research + writing + fact-checking pipelines.

Supervisors can be nested: a supervisor of supervisors handles the highest-level orchestration while sub-supervisors manage specialized clusters.
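
As a rough sketch of the Supervisor pattern, the code below has one function plan subtasks, delegate each to a named worker, and combine the results. The worker functions and the fixed `plan` are assumptions made for illustration; in a real system each worker and the planning step would be backed by an LLM call.

```python
from typing import Callable


# Hypothetical workers; each would normally wrap its own model and tools.
def research(task: str) -> str:
    return f"notes on {task}"


def write(task: str) -> str:
    return f"draft about {task}"


def fact_check(task: str) -> str:
    return f"checked claims in {task}"


WORKERS: dict[str, Callable[[str], str]] = {
    "research": research,
    "write": write,
    "fact_check": fact_check,
}


def plan(task: str) -> list[tuple[str, str]]:
    """Stand-in for the supervisor's planning step: (worker, subtask) pairs."""
    return [("research", task), ("write", task), ("fact_check", task)]


def supervise(task: str) -> str:
    results = []
    for worker_name, subtask in plan(task):
        # The supervisor decides who runs; the worker only executes.
        results.append(WORKERS[worker_name](subtask))
    return " | ".join(results)  # synthesize the results into one output


print(supervise("agentic workflows"))
```

Because `supervise` has the same shape as a worker (a string in, a string out), it could itself be registered in another supervisor's `WORKERS` table, which is one way to picture the nesting described above.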

Swarm Pattern

All agents operate as peers. One agent starts the work, completes its portion, then passes control to another via a handoff tool. There is no central coordinator - agents communicate directly.

Best for: Distributed problem-solving where each agent owns a clearly bounded domain and can operate semi-independently.
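
A handoff can be sketched as an agent returning the name of the peer that should take over. The example below is a simplified illustration under that assumption; the agent names, the `Turn` type, and the keyword-based routing are hypothetical, and it does not use any particular framework's API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Turn:
    output: str
    handoff_to: Optional[str] = None   # name of the next peer, or None to stop


def triage_agent(message: str) -> Turn:
    # Stand-in for an LLM deciding which peer owns the request.
    if "refund" in message.lower():
        return Turn("routing to billing", handoff_to="billing")
    return Turn("routing to support", handoff_to="support")


def billing_agent(message: str) -> Turn:
    return Turn("refund issued")


def support_agent(message: str) -> Turn:
    return Turn("troubleshooting steps sent")


AGENTS: dict[str, Callable[[str], Turn]] = {
    "triage": triage_agent,
    "billing": billing_agent,
    "support": support_agent,
}


def run_swarm(message: str, start: str = "triage", max_handoffs: int = 5) -> list[str]:
    trail: list[str] = []
    current: Optional[str] = start
    for _ in range(max_handoffs):
        if current is None:
            break
        turn = AGENTS[current](message)
        trail.append(f"{current}: {turn.output}")
        current = turn.handoff_to   # control passes peer to peer, no coordinator
    return trail


print(run_swarm("I want a refund"))
```

Note that `run_swarm` only follows the handoffs the agents choose; it makes no routing decisions of its own, which is the difference from a supervisor.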

You can mix both patterns. A supervisor can coordinate swarms; a swarm agent can itself be a supervisor for a nested cluster.

When Should You Use a Single Agent vs. Multiple Agents?

A single agent handles most tasks well. It maintains state, makes decisions, and executes across a full workflow. For tasks with a clear scope and a manageable number of tools, one agent is sufficient.

Use multiple agents when:

- The task involves too many tools or decision points for a single context window

- Different steps require genuinely different expertise or access levels

- Parallelization would reduce execution time significantly

- Failures in one domain should not cascade across the entire workflow

The tradeoff: Multi-agent systems gain capability but add architectural complexity - you need orchestration logic, clean handoff protocols, and distributed state management to make them reliable.

How Do You Evaluate an Agentic AI System?

Evaluation operates at three levels, and all three matter.

LLM Level

Test the model's raw output quality. Is it accurate? Coherent? Consistent across repeated prompts? Use structured prompts and validate responses against known ground truth.

System Level

Test component integration. Do agents coordinate correctly? Does state persist across steps? Are tool calls returning expected results? In practice, many production failures surface at this level rather than in the model itself.

Application Level

Test whether the full system solves the actual problem. Does it meet business requirements? Does it perform reliably at scale? Does it produce value users can act on?

Evaluation methods:

- Code-based tests - Deterministic assertions for predictable outputs; catches regressions

- LLM-as-judge - Use one model to evaluate another's output; scales for open-ended generation

- Human review - Highest quality signal; lowest scalability; essential for high-stakes decisions

Best practice: combine all three. Code-based tests handle regression detection; LLM evaluation scales assessment; human review anchors quality standards.
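
One way to combine the three methods is sketched below. The function names, the 1-5 scoring scale, and the review threshold are assumptions chosen for illustration, and `fake_judge` stands in for a real call to a second model.

```python
from typing import Callable


def exact_match(output: str, expected: str) -> bool:
    """Code-based check: deterministic, so it suits regression tests."""
    return output.strip().lower() == expected.strip().lower()


def judge_score(output: str, rubric: str, judge: Callable[[str], str]) -> int:
    """LLM-as-judge: ask a second model for a 1-5 score and parse it defensively."""
    prompt = f"Rubric: {rubric}\nAnswer: {output}\nReply with a single score from 1 to 5."
    reply = judge(prompt)
    digits = [ch for ch in reply if ch.isdigit()]
    if not digits:
        raise ValueError(f"judge returned no score: {reply!r}")
    return max(1, min(5, int(digits[0])))   # clamp to the expected range


def needs_human_review(score: int, high_stakes: bool, threshold: int = 4) -> bool:
    """Human review: always for high-stakes outputs, otherwise only for low scores."""
    return high_stakes or score < threshold


def fake_judge(prompt: str) -> str:
    """Stand-in for a real judge model, hardcoded so the example runs offline."""
    return "Score: 4"


score = judge_score("Paris is the capital of France.", "factual accuracy", fake_judge)
print(exact_match(" Paris ", "paris"), score, needs_human_review(score, high_stakes=False))
```

The judge's reply is parsed rather than trusted as-is because a judge model is itself an LLM and may return extra text around the score.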

What Are the Biggest Challenges in Building Agentic Systems?

Hallucinations

LLMs can generate confident-sounding outputs that are factually wrong. In agentic systems, a hallucination in one step can propagate through every subsequent step that depends on it.

Mitigation: Use retrieval-augmented generation (RAG) to ground responses in verified sources. Build explicit verification steps into high-stakes decision paths. Treat LLM outputs as hypotheses to be checked, not facts to be acted on.
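
Treating an output as a hypothesis can be as simple as refusing to act until a claim is tied to a verified source. The sketch below assumes the model is asked to return a source ID and a supporting quote with each claim; the `SOURCES` table, the `Claim` type, and the escalation message are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical verified sources, keyed by ID; a RAG system would retrieve these.
SOURCES = {
    "policy-7": "Refunds are available within 30 days of purchase.",
}


@dataclass
class Claim:
    text: str        # what the model asserts
    source_id: str   # the source it says supports the assertion
    quote: str       # the passage it says it is relying on


def is_grounded(claim: Claim) -> bool:
    """True only if the quoted passage really appears in the cited source."""
    source = SOURCES.get(claim.source_id)
    return source is not None and claim.quote != "" and claim.quote in source


def act_on(claim: Claim) -> str:
    if not is_grounded(claim):
        return "escalate: claim not supported by a verified source"
    return f"proceed: {claim.text}"


good = Claim("Customer is eligible for a refund", "policy-7", "within 30 days of purchase")
bad = Claim("Refunds are available for 90 days", "policy-7", "within 90 days of purchase")
print(act_on(good))
print(act_on(bad))
```

This check is deliberately narrow: it confirms the quote exists in the source, not that the quote actually supports the claim. A stricter pipeline would add a second verification step for that.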

Cost

Every LLM call costs money. Agentic systems make many calls - planning, tool selection, execution, verification. Token usage compounds quickly across multi-agent architectures.

Mitigation: Cache frequent results. Route simpler subtasks to smaller, cheaper models (e.g., Claude Haiku, GPT-4o mini). Monitor token consumption per workflow and set hard budget limits.
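
The three mitigations fit together naturally, as in the sketch below. Everything here is illustrative: the model tier names, the word-count routing rule, and the word-based token estimate are assumptions, and `cached_call` returns a canned string instead of calling a model.

```python
from functools import lru_cache


class BudgetExceeded(RuntimeError):
    pass


class TokenBudget:
    """Hard limit on tokens spent by one workflow."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    def charge(self, tokens: int) -> None:
        if self.used + tokens > self.limit:
            raise BudgetExceeded(f"{self.used + tokens} tokens would exceed limit {self.limit}")
        self.used += tokens


def pick_model(task: str) -> str:
    """Hypothetical routing rule: short tasks go to the cheaper tier."""
    return "small-model" if len(task.split()) <= 12 else "large-model"


budget = TokenBudget(limit=100)


@lru_cache(maxsize=256)
def cached_call(task: str) -> str:
    tokens = len(task.split())   # crude stand-in for a real token count
    budget.charge(tokens)        # only cache misses reach this line
    return f"[{pick_model(task)}] answer to: {task}"


print(cached_call("summarize this ticket"))
print(cached_call("summarize this ticket"))   # served from cache, no extra charge
print(budget.used)
```

Caching on the exact task string only helps when requests repeat verbatim; whether that holds depends on the workload.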

Debugging

When a multi-agent system fails, identifying the root cause is non-trivial. The reasoning happens inside the LLM; the logic is distributed across agents; the failure may not be visible until several steps after the cause.

Mitigation: Implement structured logging at every agent step. Use tracing tools (LangSmith, Arize Phoenix, OpenTelemetry) to reconstruct execution paths. Instrument handoffs explicitly.
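
Structured logging at each step might look like the sketch below, which uses only the Python standard library and writes one JSON record per line. The field names (`trace_id`, `agent`, `event`) and the `handoff` helper are assumptions for illustration; dedicated tracing tools define their own schemas.

```python
import json
import time
import uuid


def new_trace_id() -> str:
    """One ID per workflow run, shared by every agent that touches it."""
    return uuid.uuid4().hex


def log_step(trace_id: str, agent: str, event: str, **fields: object) -> str:
    record = {"trace_id": trace_id, "agent": agent, "event": event, "ts": time.time(), **fields}
    line = json.dumps(record, sort_keys=True)
    print(line)   # in production this would go to a log pipeline instead of stdout
    return line


def handoff(trace_id: str, sender: str, receiver: str, payload: str) -> str:
    """Log the handoff explicitly so the execution path can be reconstructed later."""
    log_step(trace_id, sender, "handoff", to=receiver, payload_chars=len(payload))
    return payload


trace = new_trace_id()
log_step(trace, "planner", "tool_call", tool="search", query="agent frameworks")
handoff(trace, "planner", "writer", "outline: intro, patterns, evaluation")
```

Filtering the output by `trace_id` then yields the ordered sequence of steps for a single run, even when several agents were involved.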

Which Tools and Frameworks Support Agentic Workflow Development?

Several frameworks exist for building agentic systems today, at varying levels of maturity (OpenAI, for example, describes Swarm as experimental and educational):

| Framework | Best For |
|---|---|
| LangGraph (LangChain) | Graph-based multi-agent workflows with explicit state management |
| AutoGen (Microsoft) | Conversational multi-agent systems with built-in debate/review patterns |
| OpenAI Swarm | Lightweight peer-to-peer agent handoffs |
| CrewAI | Role-based agent teams with supervisor orchestration |
| Anthropic Claude + tool use | Single-agent workflows with structured tool calling |

Model Context Protocol (MCP) - an emerging open standard developed by Anthropic - standardizes how applications expose tools and context to LLMs. MCP makes agents composable: tools built for one agent can be discovered and reused by another without custom integration work. As MCP adoption grows, it removes a major friction point in multi-agent system design.
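
To make the idea of discoverable tools concrete, here is a toy in-process registry. It is not the MCP SDK or its wire protocol: it only borrows the general shape of a tool descriptor (a name, a description, and a JSON Schema for inputs), and the `ToolRegistry` class, the `get_weather` tool, and the required-field check are all assumptions for illustration.

```python
from typing import Any, Callable


class ToolRegistry:
    """Toy registry: tools are described once and can be listed by any agent."""

    def __init__(self) -> None:
        self._tools: dict[str, dict[str, Any]] = {}

    def register(self, name: str, description: str,
                 input_schema: dict[str, Any], handler: Callable[..., Any]) -> None:
        self._tools[name] = {
            "name": name,
            "description": description,
            "inputSchema": input_schema,
            "handler": handler,
        }

    def list_tools(self) -> list[dict[str, Any]]:
        """What an agent discovers: descriptors only, no implementation."""
        return [{k: v for k, v in tool.items() if k != "handler"}
                for tool in self._tools.values()]

    def call(self, name: str, arguments: dict[str, Any]) -> Any:
        tool = self._tools[name]
        required = tool["inputSchema"].get("required", [])
        missing = [key for key in required if key not in arguments]
        if missing:
            raise ValueError(f"missing arguments: {missing}")
        return tool["handler"](**arguments)


registry = ToolRegistry()
registry.register(
    name="get_weather",
    description="Return a canned forecast for a city.",
    input_schema={"type": "object",
                  "properties": {"city": {"type": "string"}},
                  "required": ["city"]},
    handler=lambda city: f"Sunny in {city}",
)

print(registry.list_tools())
print(registry.call("get_weather", {"city": "Oslo"}))
```

The point of the separation is that a second agent needs only the output of `list_tools` to decide whether and how to call a tool it has never seen before.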

How Should You Start Building Agentic Workflows?

Start with one agent, one task, one or two tools. Validate that the core loop works before adding complexity. Then iterate:

- Define a real problem - Not a demo. Customer service triage, data extraction, report generation, code review. Tasks that are repetitive but require contextual judgment.

- Build a single agent - Give it a clear goal, a system prompt, and the minimum tools it needs.

- Evaluate before scaling - Run the three-level evaluation before adding agents or tools.

- Add complexity incrementally - Memory, then multiple tools, then multiple agents. Each addition should be justified by a measurable improvement in outcomes.

- Monitor in production - Track token cost, error rates, and task completion per workflow. Set alerts. Agentic systems behave differently at scale than in testing.
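
The monitoring step can start small. The sketch below tracks the three metrics named above for one workflow and turns them into alerts; the `WorkflowStats` class and the threshold values are assumptions and would need tuning for a real system.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStats:
    runs: int = 0
    completed: int = 0
    errors: int = 0
    tokens: int = 0

    def record(self, tokens: int, completed: bool, error: bool = False) -> None:
        self.runs += 1
        self.tokens += tokens
        self.completed += int(completed)
        self.errors += int(error)

    @property
    def error_rate(self) -> float:
        return self.errors / self.runs if self.runs else 0.0

    @property
    def completion_rate(self) -> float:
        return self.completed / self.runs if self.runs else 0.0

    @property
    def tokens_per_run(self) -> float:
        return self.tokens / self.runs if self.runs else 0.0


def alerts(stats: WorkflowStats, max_error_rate: float = 0.05,
           max_tokens_per_run: float = 2000.0) -> list[str]:
    """Return a message for every threshold the workflow currently exceeds."""
    found = []
    if stats.error_rate > max_error_rate:
        found.append(f"error rate {stats.error_rate:.0%} exceeds {max_error_rate:.0%}")
    if stats.tokens_per_run > max_tokens_per_run:
        found.append(f"tokens per run {stats.tokens_per_run:.0f} exceeds {max_tokens_per_run:.0f}")
    return found


triage = WorkflowStats()
for _ in range(3):
    triage.record(tokens=1000, completed=True)
triage.record(tokens=5000, completed=False, error=True)
print(triage.completion_rate, alerts(triage))
```

Keeping one `WorkflowStats` per workflow, not one global counter, makes it possible to see which workflow is driving cost or errors.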

What Is the Future of Agentic AI in Software Development?

The shift underway is from implementation to orchestration. Traditional software development means writing code that does a specific thing. Agentic development means writing systems that figure out what to do.

This changes the relevant skills:

- Prompt engineering becomes a core competency alongside traditional coding

- System design increasingly involves agent topology decisions (who talks to whom, when, with what memory)

- Evaluation design becomes as important as feature development

Developers who understand both traditional software engineering and agentic system design will build the systems that define the next decade of software. The two skillsets are complements, not replacements.

The practical implication: The question is no longer whether to use agentic systems. It is which tasks warrant them, which patterns fit the problem, and how to build them so they are reliable, measurable, and cost-efficient.

FAQ: Agentic Workflows

What is an agentic workflow?

An agentic workflow is an AI system powered by a large language model that pursues a defined goal by reasoning, selecting tools, and taking actions - rather than following a fixed sequence of hardcoded steps.

What is the difference between an agent and a chatbot?

A chatbot responds to inputs. An agent acts on them. Agents use tools, make decisions across multiple steps, maintain state, and work toward a goal without step-by-step human instruction.

What is the Supervisor pattern in multi-agent systems?

The Supervisor pattern designates one orchestrator agent to coordinate multiple specialized worker agents. The supervisor breaks tasks into subtasks, delegates to specialists, and synthesizes results into a unified output.

What is Model Context Protocol (MCP)?

Model Context Protocol (MCP) is an open standard developed by Anthropic that defines how applications expose tools and context to LLMs. It makes agentic tools discoverable and composable across different systems without custom integration work.

What frameworks are used to build agentic AI systems?

The most widely used frameworks include LangGraph (by LangChain), AutoGen (by Microsoft), CrewAI, OpenAI Swarm, and Anthropic's Claude with native tool use. Each has different strengths depending on whether you need graph-based state management, multi-agent conversation, or lightweight handoff protocols.